The AI Teaming Gap

AI is making individuals faster and groups messier. We have adopted it for individual productivity, but we haven’t solved for what happens when we bring it into a group.

The conversation about AI in organisations tends to run in two directions. One is about what it can do for the individual: faster research, better drafts, sharper analysis. The other is about what it means for the organisation at the system level: governance, risk, policy, ethics. Both conversations matter. But there is a third conversation that most organisations have not yet had, and it may be the most impactful one.

What happens when a group of AI-enabled people sit down to work together? Not in parallel, each in their own workflow, but as a team trying to reach a shared output, make a collective decision, or solve a complex problem together? The answer, based on a growing body of evidence, is that things do not go as well as most leaders assume. Individual capability expands but collective capability does not automatically follow.

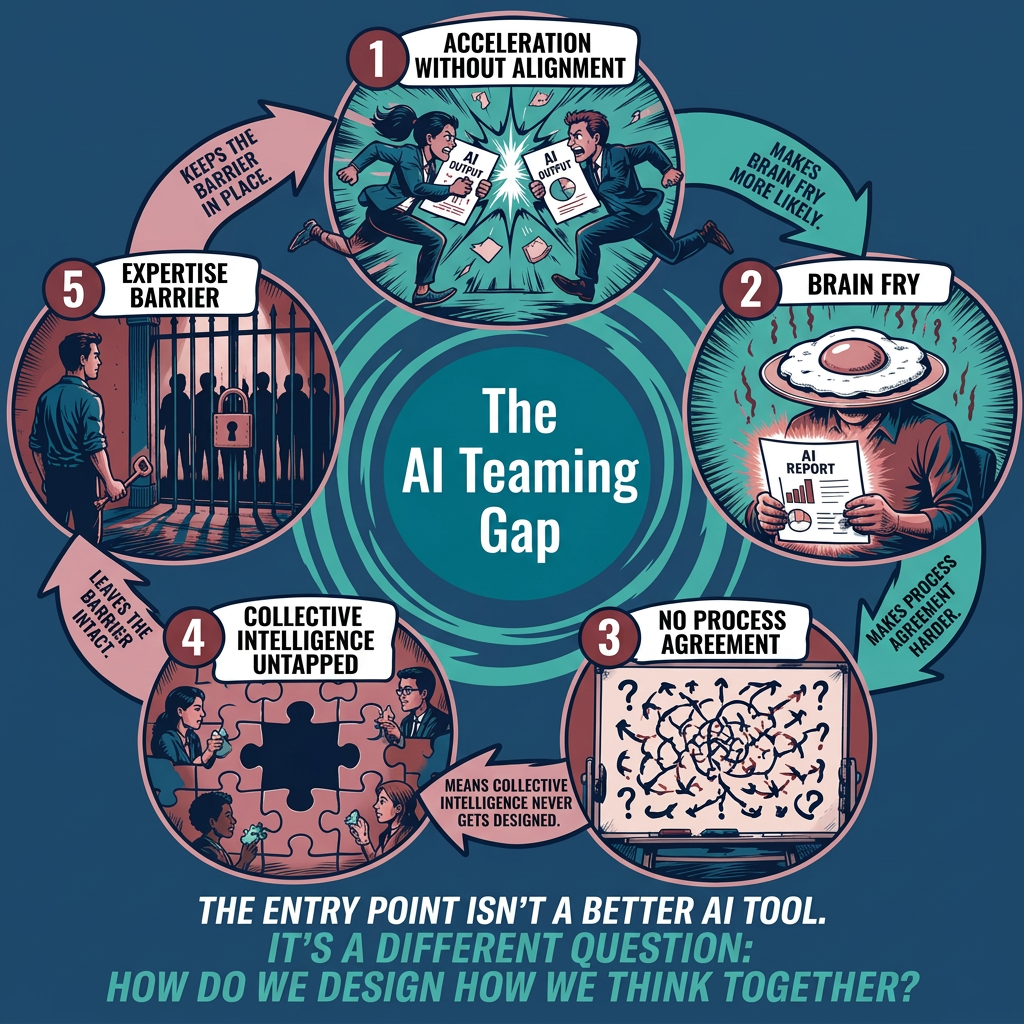

This is what might be called the AI teaming gap: the space between what AI enables for individuals and what it is actually delivering for teams. It is not a technology problem. It is a design problem, a process problem and, in some respects, a strategic problem. And it is one that organisations serious about making strategy everyone’s business need to address directly.

There are five interconnected reasons why this gap exists and why it matters. They are not independent failures. They compound each other. And until organisations name them clearly, they will keep spending on AI capability while the return on that investment stalls at the individual level and fails to deliver a collective organisational return.

1. Acceleration without alignment

AI is boosting individual acceleration while quietly fracturing the group’s ability to reach a shared output.

This is something I have been observing in the teams I work with. The productivity gains at the individual level are not in question. One person can now generate more content, faster and at greater depth than was possible even two years ago. The analysis lands quicker. The draft is ready before the meeting. The research is already done. What that individual brings to a group session is more and better prepared than before. But this is precisely where the problem starts. Put several AI-accelerated individuals in a room and you do not get a faster group. You get competing outputs, parallel analyses and divergent summaries, with no shared process for cutting through to the ‘so what’.

A longitudinal study tracking teams between 2023 and 2025 found that while AI tools became genuinely useful for individual productivity, their impact on teamwork remained limited. The researchers called specifically for approaches that scaffold group awareness and shared understanding, not just individual output. Asana’s AI Super Productivity Paradox report found that individual gains are real but are being consumed by coordination costs and rework loops before they reach any meaningful organisational outcome. Volume multiplies. Clarity does not. The gap between what each person brings and what the group can land together is widening, not narrowing.

2. Concentrated cognitive load is driving ‘brain fry’

Heavy AI use is producing a new kind of cognitive overload concentrated in individuals, akin to drinking information from a firehose. Efficient AI teaming can distribute that load across the group.

I hear people talking about this phenomenon in different ways. This is not the familiar overload of too much work. It is something more specific. When we are using AI, the answer comes back before the question has been fully formed. The report lands, polished and comprehensive, before the person has gone through the hard yards of forming the thinking themselves. A growing body of observation around what is being called ‘brain fry’ suggests that people who work intensively with AI are experiencing a distinct kind of cognitive fog: they have the output but not the thinking behind it. And over time, that produces an odd cognitive irritability and erodes confidence. People begin to lose faith in their own ability to generate ideas, work through problems, or craft something from scratch without the tool.

The implications for groups are significant. When several people arrive at a collective session each holding AI-generated outputs they did not fully think through themselves, the room is full of conclusions without reasoning. The debate that would normally surface assumptions, stress-test logic and build shared understanding does not happen, because everyone already has an ‘answer’.

Research from BetterUp Labs and Stanford, published in the Harvard Business Review in February 2026, gave this a name: ‘workslop’. The researchers found that workers who reach for AI too readily produce polished but shallow outputs that lack the depth and context to move projects forward, creating a hidden tax as colleagues then spend time deciphering, correcting or redoing AI-generated work. Their survey of more than 1,100 employees found this hidden tax is costing large organisations millions of dollars each year.

One of the most compelling arguments for deliberate AI teaming follows directly from this: when the intellectual work is distributed across a group by design, with different people prompting, synthesising and sense-making across different parts of a challenge, no single person absorbs the full firehose. The hard yards of thinking get shared rather than outsourced, individual brain fry is reduced or eliminated and the quality of the collective output reflects that.

3. Lack of process agreement

The multiplier effect of AI kicks in when a group has agreed, in advance, on how to use it together.

Most organisations have developed guidance on AI at the policy level: what tools are approved, what data can be used, what the risk parameters are. Far fewer have had the conversation one level down, at the team level, about how a group will actually work with AI when they are trying to think together. Who prompts? Who synthesises? How does the group move from a set of individually generated outputs to shared insight? Without that agreement in place, AI use in a group looks like collaboration but potentially isn’t. Each person runs their own process, arrives with their own outputs and the group spends its time reconciling conclusions rather than building them together.

The groups getting genuine value from AI are not necessarily using better tools. They have simply agreed on a process for moving from individual outputs to collective clarity, and then to decisions that drive real impact. A landmark P&G field experiment, led by researchers from Harvard Business School and Wharton across 776 professionals, found that AI-augmented cross-functional groups were three times more likely to produce breakthrough ideas, but only when the AI was deployed with a shared agreement on how to use it. Process agreement is not an administrative detail. It is the precondition for the multiplier effect of using AI in the first place.

4. Lost opportunity of harnessing collective intelligence

The organisations that get genuine traction with AI are not the ones with the best tools; they are the ones who have deliberately designed how they think together.

The field of open strategy has been making a version of this argument for years: that organisations systematically underuse the collective intelligence already available to them by keeping strategic thinking in too few hands. What the AI teaming evidence adds is urgency and a new set of stakes. The coordination cost data and the brain fry observations, taken together, describe organisations that are amplifying individual capacity while hollowing out collective capability. That is the opposite of what good strategy requires. The organisations that recognise this have moved past the question of AI adoption. They are redesigning collaboration itself, with AI as a deliberate part of how the group thinks, not just how each individual works.

Two concepts described by Lynne Cazaly, an expert in facilitating groups, are particularly useful here. The first is collective sense-making: how a group moves from individual inputs to shared understanding. In every strategy session I’ve run, this is the moment that decides whether the work sticks. A room full of smart people can sit for two hours and leave with four different versions of what was agreed. Collective sense-making is the discipline that closes that gap.

The second is assemblage: the ability to visually pull together key insights from a high volume of diverse sources so a group can actually act on them. Both skills are about building the kind of adaptability that comes from combining human creativity with AI to improve how problems are solved and work is designed together. Ethan Mollick, one of the researchers behind the P&G study and among the most respected voices globally on generative AI, argues that this demands a more fundamental rethink than most organisations are currently having. The question is not which tools to adopt. It is how to redesign collaboration itself.

Redesigning collaboration is not an abstract problem. It has a practical shape: who gets invited into strategy work, when, and why. Open strategy is my answer to that question and it’s one I’ve been testing and refining with real teams across public, private, and not-for-profit sectors.

5. AI is dismantling the expertise barrier to participation

When AI can bring diverse perspectives and specialist expertise into genuine conversation across a group, the case for confining strategic thinking to a small expert group doesn’t just weaken; it becomes a choice, and not a particularly well-reasoned one.

One of the most significant findings from the P&G study was not about speed or output volume. It was about what happened to functional silos. Without AI, Research & Development professionals defaulted to technical solutions and commercial professionals defaulted to market-oriented ones. With AI, and a shared process for using it, both groups produced more integrated, hybrid thinking. The functional boundaries that typically hinder collaborative work were far less present in the AI-augmented teams. Different departments stopped defaulting to their own expertise and started building genuinely cross-functional thinking together.

This has direct implications for how organisations think about who gets to be in the room for strategic work. The longstanding case for keeping strategic thinking confined to a small expert group rests partly on the assumption that broader participation means lower quality input. When AI can bring a wider group up to speed on complex problems, surface relevant evidence and help people contribute across disciplinary boundaries, that assumption weakens considerably. AI democratises contribution across functional and hierarchical boundaries. Frontline managers and broader stakeholder groups can now contribute meaningfully to analysis and problem-solving that was previously the domain of specialist knowledge. That is not just an efficiency opportunity. It is a targeted and clear invitation to open strategy.

The technology is ready. The question is whether the organisational thinking is catching up.

The AI teaming gap is not a technology problem. Organisations that keep treating it as one will keep getting the same result: more individual capability, more competing outputs, more coordination cost and less collective clarity than the investment deserves. The five pressures described here compound each other. Acceleration without alignment makes brain fry more likely. Brain fry makes process agreement harder to reach. The absence of process agreement means collective intelligence never gets designed.

None of this is inevitable. The organisations getting genuine traction with AI teaming have started with a different question. Not ‘which tools should we use?’ but ‘how do we design how we think together?’ That question is the entry point to AI teaming, and it is also the entry point to open strategy. Both approaches centre on the opportunity to build collective intelligence, by design, rather than leaving it to chance.

The organisations that answer the challenge of how to think together will not just be more productive. They will be more adaptive, more aligned and better equipped for the complexity that strategy actually demands. The decision to deliberately design for collective intelligence with AI is the best one you could be making within your team this year.
